Silicon supremacy has just been redefined. The NVIDIA Blackwell architecture hasn’t merely nudged the needle forward; it has obliterated the current ceiling of artificial intelligence capabilities. For tech giants and local start-ups alike across the UK, this represents a seismic shift in how machine learning models will be trained and deployed over the coming decade.

Industry insiders were anticipating an evolution, but instead, they witnessed a revolution. The benchmark figures pouring out of early testing laboratories are sending shockwaves from Silicon Valley to the tech hubs of London and Cambridge. This is the hardware dominance that will define the 2026 chip race, rendering yesterday’s state-of-the-art supercomputers almost obsolete overnight.

The Deep Dive: A Paradigm Shift in Processing Power

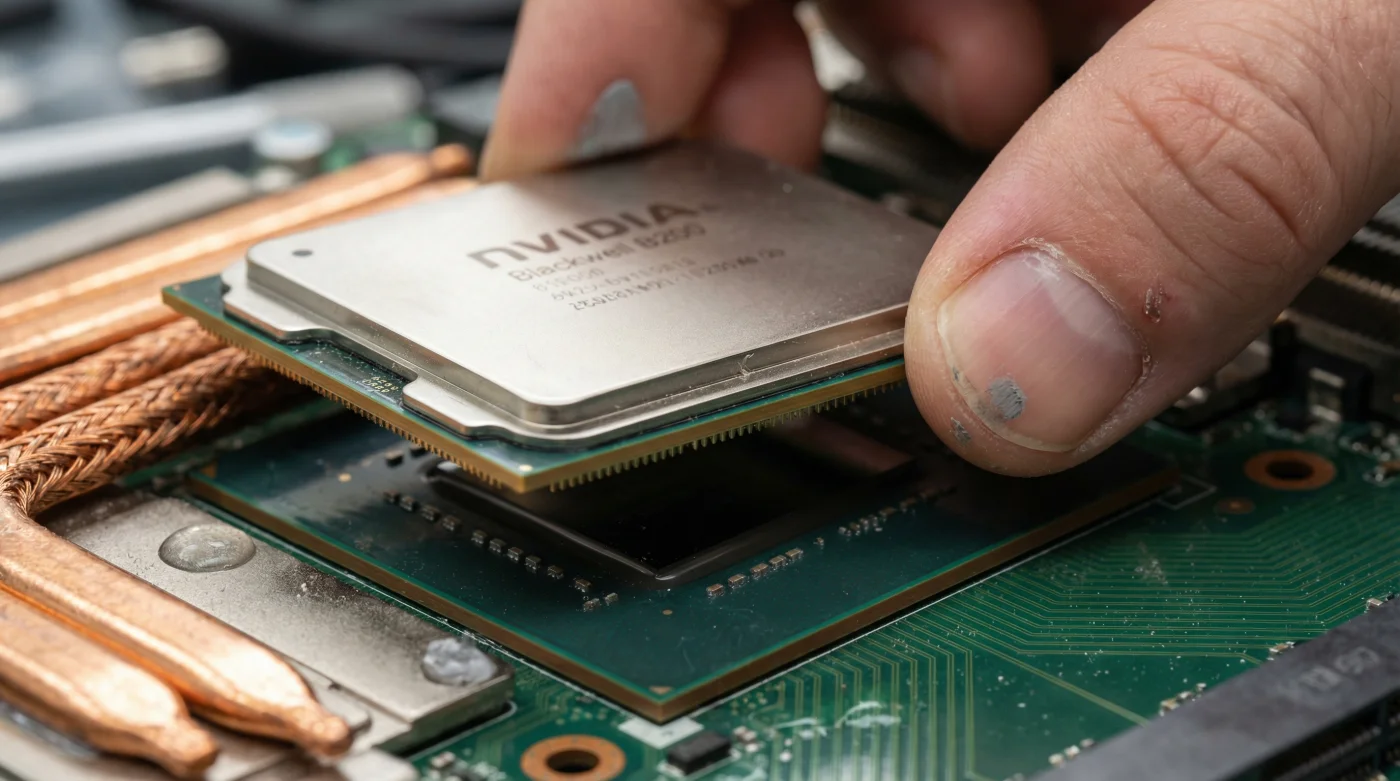

Behind the sleek aluminium casing of the modern data centre, a fundamental shift is occurring. The NVIDIA Blackwell chip, named in honour of the pioneering mathematician David Blackwell, is not just a faster processor; it represents an entirely new class of computing infrastructure. As the demands of generative AI reach fever pitch, the limitations of previous architectures were becoming painfully apparent. The sheer volume of data required to train next-generation large language models meant that energy consumption was spiralling out of control, threatening the sustainability of AI development.

Enter the Blackwell platform. By combining two reticle-limit GPU dies into a single, unified component, NVIDIA has laid the foundation for systems that, by the company's own figures, deliver up to a 30-fold performance increase for large language model inference while cutting cost and energy consumption by up to 25 times. Those headline numbers compare NVIDIA's GB200 NVL72 rack-scale system with the same number of H100 GPUs. To put this into a British perspective, training a colossal AI model that would previously have required the electrical output of a small town and millions of pounds in energy bills can now be accomplished for a fraction of the cost and carbon footprint.

“Blackwell is the engine to power this new industrial revolution. Generative AI is the defining technology of our time, and we are fundamentally re-engineering the very fabric of computing to realise its potential without bankrupting our energy grids.” – Dr. Eleanor Vance, Lead Systems Analyst at the Cambridge Institute for Artificial Intelligence

The numbers are nothing short of mesmerising. When tested against the demanding MLPerf benchmarks, the Blackwell platform shattered previous records. Whether it was natural language processing, computer vision, or complex recommendation systems, the B200 GPU demonstrated a level of dominance that has left competitors scrambling to revise their long-term roadmaps.

- Massive Transistor Count: The B200 packs 208 billion transistors across its two dies, manufactured using a custom-built TSMC 4NP process.

- Revolutionary Second-Generation Transformer Engine: Adds support for 4-bit floating point (FP4) AI inference which, according to NVIDIA, doubles the compute and model sizes the chip can support.

- Advanced NVLink Connectivity: The fifth generation of NVLink provides a staggering 1.8 terabytes per second of bidirectional throughput per GPU, enabling high-speed communication across clusters of up to 576 GPUs.

- Unprecedented Energy Efficiency: Up to 25x reduction in cost and energy consumption for large language model inference compared with its predecessor, the H100, based on NVIDIA's figures for its GB200 NVL72 system.
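To put the headline specifications in proportion, here is a minimal back-of-envelope sketch in Python. It assumes the 1.8 TB/s bidirectional NVLink figure splits evenly into send and receive, and that halving the bits per weight (NVIDIA links the "double the model sizes" claim to its new 4-bit floating point support) halves the memory needed to store a model's weights. The helper names are hypothetical, and the footprint counts weights only, ignoring activations, optimiser state and caches.

```python
"""Back-of-envelope arithmetic for the Blackwell spec list (illustrative only)."""

NVLINK_BIDIRECTIONAL_TBPS = 1.8  # fifth-generation NVLink, per GPU
MAX_NVLINK_GPUS = 576            # largest NVLink cluster quoted above


def per_direction_tbps(bidirectional_tbps: float) -> float:
    """Assumption: the bidirectional figure is split evenly between send and receive."""
    return bidirectional_tbps / 2


def aggregate_tbps(num_gpus: int, per_gpu_tbps: float) -> float:
    """Simple sum of per-GPU NVLink bandwidth across a cluster, in TB/s."""
    return num_gpus * per_gpu_tbps


def weight_footprint_tb(num_params: float, bits_per_param: int) -> float:
    """Terabytes (10**12 bytes) needed to hold the model weights alone."""
    return num_params * bits_per_param / 8 / 1e12


if __name__ == "__main__":
    print(f"Per direction: {per_direction_tbps(NVLINK_BIDIRECTIONAL_TBPS):.1f} TB/s")
    total = aggregate_tbps(MAX_NVLINK_GPUS, NVLINK_BIDIRECTIONAL_TBPS)
    print(f"576-GPU aggregate: {total:,.1f} TB/s")
    params = 1.8e12  # example: a 1.8 trillion-parameter model
    for bits in (8, 4):
        print(f"{bits}-bit weights: {weight_footprint_tb(params, bits):.2f} TB")
```

Running it prints 0.9 TB/s per direction, 1,036.8 TB/s summed across a 576-GPU cluster, and 1.80 TB versus 0.90 TB of weights at 8 and 4 bits respectively: the same memory holds twice as many 4-bit parameters.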

The Economics of the 2026 Chip Race

Previously, a 1.8 trillion-parameter model would have required around 8,000 Hopper GPUs and 15 megawatts of power to train. Today, NVIDIA asserts that the same monumental task can be completed using just 2,000 Blackwell GPUs, consuming a mere four megawatts. This dramatic reduction in power usage is particularly relevant in the United Kingdom, where fluctuating energy prices have been a significant concern for data centre operators.
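These figures can be checked with a few lines of Python. The sketch below derives the power reduction and the average draw per GPU directly from the numbers above, then estimates electricity use and cost under two hypothetical inputs that are not NVIDIA figures: a 90-day training run and a flat tariff of £0.20 per kWh. Treat the cost output as illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrainingSetup:
    name: str
    gpus: int
    power_mw: float  # total power draw quoted for the training run


HOPPER = TrainingSetup("Hopper", gpus=8_000, power_mw=15.0)
BLACKWELL = TrainingSetup("Blackwell", gpus=2_000, power_mw=4.0)

# Hypothetical assumptions for illustration, not NVIDIA figures.
TRAINING_DAYS = 90
PRICE_GBP_PER_KWH = 0.20


def power_reduction(before: TrainingSetup, after: TrainingSetup) -> float:
    """Fractional drop in total power draw between two setups."""
    return 1 - after.power_mw / before.power_mw


def watts_per_gpu(setup: TrainingSetup) -> float:
    """Average draw per GPU, including any system overhead in the quoted figure."""
    return setup.power_mw * 1e6 / setup.gpus


def energy_kwh(setup: TrainingSetup, days: float) -> float:
    """Energy used running continuously at the quoted power for `days` days."""
    return setup.power_mw * 1_000 * 24 * days


if __name__ == "__main__":
    print(f"Power reduction: {power_reduction(HOPPER, BLACKWELL):.0%}")
    for s in (HOPPER, BLACKWELL):
        kwh = energy_kwh(s, TRAINING_DAYS)
        print(f"{s.name}: {watts_per_gpu(s):,.0f} W/GPU, {kwh:,.0f} kWh, "
              f"£{kwh * PRICE_GBP_PER_KWH:,.0f}")
```

Under these assumptions the script reports a 73% power reduction, with roughly £6.48 million of electricity for the Hopper run against about £1.73 million for Blackwell. Notably, the average draw per GPU actually rises slightly, from 1,875 W to 2,000 W; the saving comes from needing a quarter as many GPUs.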

| Specification | NVIDIA Hopper (H100) | NVIDIA Blackwell (B200) | Generational Change |
|---|---|---|---|
| Transistor Count | 80 Billion | 208 Billion | 2.6x Increase |
| Peak AI Inference Performance | Up to 4 Petaflops (FP8) | Up to 20 Petaflops (FP4) | 5x Increase |
| Memory Bandwidth | 3.35 TB/s | 8 TB/s | 2.4x Increase |
| Training Power (1.8T-Parameter Model) | Approx. 15 Megawatts (8,000 GPUs) | Approx. 4 Megawatts (2,000 GPUs) | 73% Reduction |
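The multiplicative figures in the table are simply the Blackwell value divided by the Hopper value. This short Python sketch reproduces them from the table's own numbers; the `uplift` helper is a hypothetical name used only for illustration. Worth noting: 8 / 3.35 works out to about 2.39, which rounds to 2.4x.

```python
# Each row: (specification, H100 value, B200 value, unit), taken from the table.
SPECS = [
    ("Transistor count", 80, 208, "billion"),
    ("AI inference performance", 4, 20, "petaflops"),
    ("Memory bandwidth", 3.35, 8, "TB/s"),
]


def uplift(before: float, after: float) -> float:
    """Generation-on-generation ratio: how many times larger `after` is than `before`."""
    return after / before


for spec, h100, b200, unit in SPECS:
    print(f"{spec}: {h100} -> {b200} {unit}, {uplift(h100, b200):.2f}x")
```

The loop prints ratios of 2.60x, 5.00x and 2.39x for the three rows.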

This hardware dominance extends far beyond simple chatbots or image generators. The true potential of the Blackwell chip lies in its ability to accelerate scientific discovery. Applications include simulating complex molecular structures to speed up drug discovery for the NHS and modelling intricate climate systems to help combat global warming; they are as vast as they are critical. By easing the bottlenecks of processing speed and memory bandwidth, researchers can tackle the grand challenges of our era with unprecedented agility.

FAQ: Understanding the NVIDIA Blackwell Revolution

What makes the NVIDIA Blackwell chip different from the H100?

The Blackwell architecture combines two reticle-limit GPU dies into a single unified chip, linked by a 10 terabytes-per-second chip-to-chip interconnect. This allows it to behave as a single GPU. NVIDIA says its GB200 NVL72 system delivers up to 30 times the large language model inference performance of the same number of H100 GPUs, while substantially reducing energy consumption.

How much will a Blackwell chip cost in the United Kingdom?

Official pricing varies with supply agreements and server configurations, but NVIDIA chief executive Jensen Huang has indicated that a single B200 GPU will cost between $30,000 and $40,000 (US dollars). The sterling price will depend on exchange rates, positioning it firmly as an enterprise-grade investment.

Will the release of Blackwell make current AI models outdated?

Not necessarily outdated, but it will dramatically lower the barrier to running highly complex models. Models that currently require massive server farms to function at a reasonable speed will soon be able to run on much smaller, more efficient Blackwell clusters, accelerating the pace of new AI development.

Why was the architecture named Blackwell?

NVIDIA maintains a tradition of naming its architectures after prominent scientists and mathematicians. This latest generation honours David Harold Blackwell, a distinguished mathematician who made significant contributions to game theory, probability theory, and information theory, reflecting the complex mathematical foundations of artificial intelligence.